The democratization of Artificial Intelligence

But once again, I was being naïve. The tools we use every day already depend on technology, and we’ve reached the moment where AI is starting to get involved in art and creativity.

Now, this didn’t suddenly appear in 2022. It’s been developing for years. The difference is that, recently, it’s become much more accessible to a huge audience thanks to image generation from nothing but descriptive text—or “prompts.”

Some of the best-known platforms are DALL·E and Midjourney, where you can put your ideas into words and, within seconds, get a range of images that match your description.

And depending on what you write, those images can be hyperrealistic or adapted to a specific artist’s style (and this is where authorship starts to get blurry, but that’s a reflection for another day). For example, here’s what I got after telling Midjourney to generate an image of a future Barcelona painted by Van Gogh:

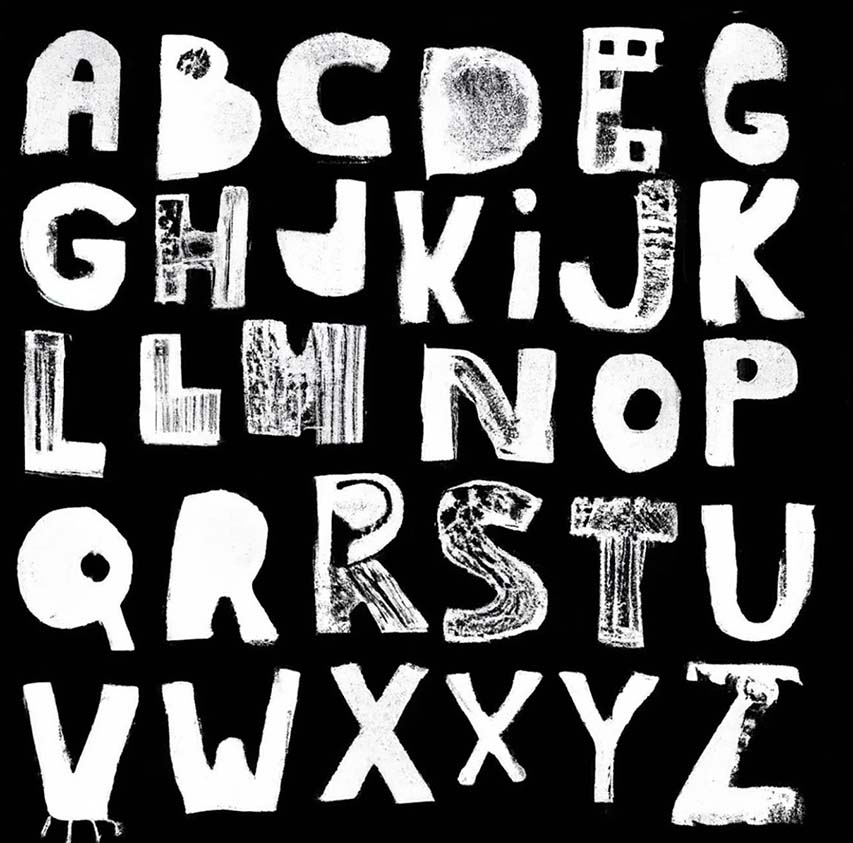

Or here’s another example: Luciano Fasan, a graphic and lettering designer, entered the prompt “Handmade primitive alphabet” into DALL·E.

And… motion graphics?

I didn’t want to be naïve all over again and assume that because I work with video and animation, this technology wouldn’t affect me. Because if you strip the definition of a video or animation down to its essence, you end up with this: sequenced images that create motion. And if AI can already generate images, then the next step is simply sequencing them to generate video.

So I started digging, and I discovered that people and companies are already testing these technologies and pushing them toward video generation. A first example is MyHeritage: with Deep Nostalgia, it brings faces to life and adds movement using only a photo. And then there’s EbSynth, an app that can apply a specific look, like an illustration style, across an entire video.

Here’s a test I did. I got this result after investing only two hours of work (the first image is the original video, the second is the app’s raw export, and the third is with a few tweaks in After Effects):

But the most interesting part of all this is that, before we label it a threat to our professions (and I want to be clear that I understand where that feeling comes from), I genuinely believe we can use it as just another tool and turn it into an advantage.

Maybe we treat it as a technique within a bigger idea, one more resource that helps us achieve the final result we’re after. That’s what director Karen X does. By combining different resources (including AI), she lands on outcomes that feel genuinely innovative and compelling.

Or maybe we use it when we need to pitch an idea to a client, so the world we’re building becomes more visual and easier to communicate. Or we use it to expand our skills and explore new creative registers, like creative director Quentin Lengelé, who even managed to generate a show’s opening credits using image generation.

What I’m really trying to say is that we live in a world that’s evolving at an insane pace, and we have to learn to adapt so we don’t become obsolete. For now, creativity and direction are still in our hands, which is why I’d encourage you to see this technology as a way to make creation easier, not as the thing that replaces us.

At the end of the day, just imagine if, back in the 1920s, someone had shown the golden-age Disney generation how to use After Effects. They probably would’ve made things even more impressive than what they achieved. Wouldn’t they?